Implementing a Continuous Security Model in the Public Cloud with Jenkins and Terraform: Part-2

In my previous blog, we looked at the different types of violations associated with Cloud Governance and Compliance and talked about the “Continuous Security Model”. We also explored how policies should be designed and what it means to detect and remediate violations.

A quick overview of the Continuous Security Model:

1. The goal is to build security checks into the CI/CD process

2. Define policies to evaluate configurations and other events

3. If violations are found, communicate them to the people responsible for the app/infra, and then remediate them

4. Continuously gather feedback from the pipeline to identify trends in violations, if any, and then update your policies

While a “Continuous Security Model” pipeline should include security tools from various stages of application development lifecycle, like Static/Dynamic Code analysis (SAST/DAST), container image vulnerability scan tools, configuration scans, compliance and governance tools etc., I will be focusing on the compliance and governance part.

The process would be the same for the other tools mentioned above. It’s always better to create smaller feedback loops in any DevOps process than to wait for the entire application to be deployed before identifying issues. This lets you fix problems at an earlier stage.

In this blog, as promised, I will show you how to build a “Continuous Security Model” using Jenkins and Terraform. Terraform and Jenkins are already used for deployment at a rapid pace, so including them in this model is a logical extension.

You can also use the Jenkins Pipeline built in this blog for just Continuous Deployment with Terraform.

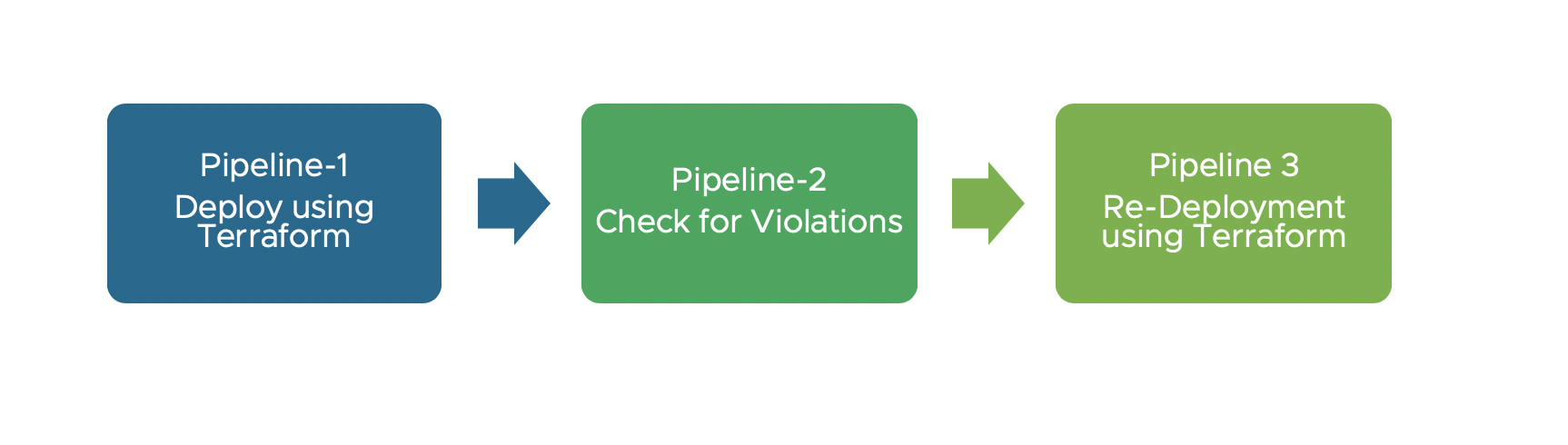

All of the jobs in this blog will be of the type “Pipeline” in Jenkins. Here is a high-level overview of what we will be configuring.

1. Pipeline 1 - Deploy using Terraform (with S3 as Terraform backend)

2. Pipeline 2 - Check for Violations (Validate against policy)

3. Pipeline 3 - Re-Deploy using Terraform (triggered if a policy is violated)

4. Pipeline 4 - Terminate/Destroy infra using Terraform

We will first deploy AWS infrastructure with Terraform and then check whether any of those resources violate policy constraints. If they do, we will either re-deploy the infrastructure to fix the violations or opt to terminate it.

Configuring Jenkins Pipelines

We won’t be using the Terraform Jenkins Plugin because it hasn’t been updated since 2016 and it does not have the additional configuration parameters needed for using Terraform with backends like S3. We will be using a Jenkinsfile instead.

Prerequisite Jenkins Plugins:

Here is a list of Jenkins plugins that need to be installed before starting the configuration.

MANDATORY:

1. Copy Artifact Plugin

2. Copy Data To Workspace Plugin

3. Credentials Plugin

4. Credentials Binding Plugin

5. Pipeline Plugin

6. Workspace Cleanup Plugin

OPTIONAL:

1. Slack Notification Plugin

2. Blue Ocean Plugin

Pipeline 1 - Deploy using Terraform

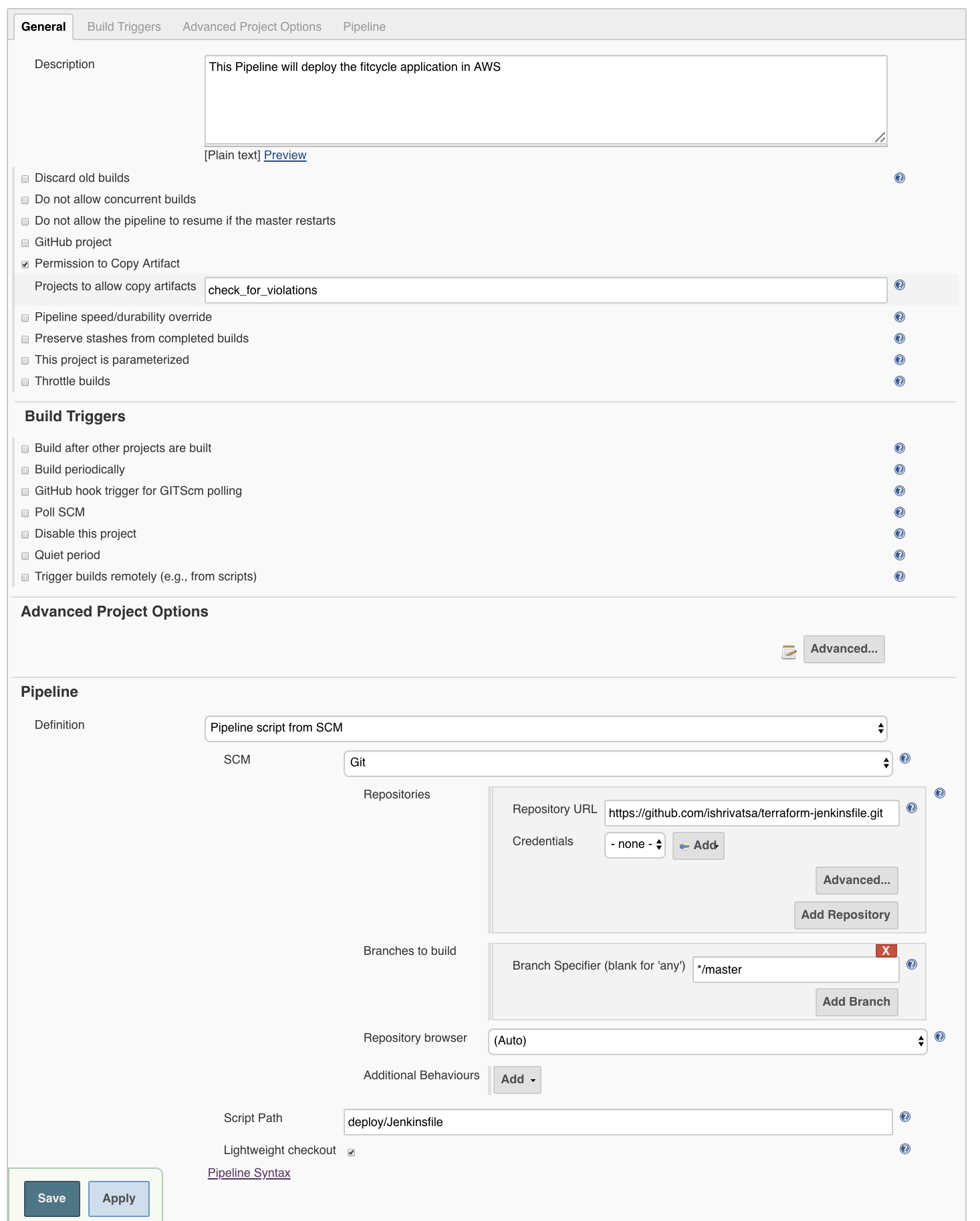

Configure the Jenkins job as shown below. This pipeline uses a Jenkinsfile to describe the various stages.

It’s a best practice to store the Jenkinsfile in a git repository. The Jenkinsfile can be stored either in the same repo as the Terraform/Ansible templates or in a separate repo.

I will be using different repos for Terraform files and Jenkinsfile.

1. The first stage is ‘Preparation’. This involves sending a Slack message that notifies the user that the pipeline has been triggered. In the same stage we also clone the repository that contains the Terraform files.

```groovy
stage('Preparation') {
    steps {
        // Notify the channel, then clone the repo that holds the Terraform files
        slackSend color: "good", message: "Status: DEPLOYING CLOUD INFRA | Job: ${env.JOB_NAME} | Build number ${env.BUILD_NUMBER} "
        git 'https://github.com/ishrivatsa/demo-secure-state.git'
    }
}
```

2. Stage 2 installs all the dependencies for Terraform.

```groovy
stage('Install TF Dependencies') {
    steps {
        sh "sudo apt install wget zip python-pip -y"
        // Download the Terraform 0.12.5 binary and put it on the PATH
        sh "curl -o terraform.zip https://releases.hashicorp.com/terraform/0.12.5/terraform_0.12.5_linux_amd64.zip"
        sh "unzip terraform.zip"
        sh "sudo mv terraform /usr/bin"
        sh "rm -rf terraform.zip"
    }
}
```

3. Stage 3 is where the Terraform code is “deployed” (the apply command).

```groovy
stage('Apply') {
    environment {
        // TF_VAR_<name> values are picked up by Terraform as input variables
        TF_VAR_option_5_aws_ssh_key_name = "adminKey"
        TF_VAR_option_6_aws_ssh_key_name = "adminKey"
        TF_VAR_option_1_aws_access_key = credentials('ACCESS_KEY_ID')
        TF_VAR_option_2_aws_secret_key = credentials('SECRET_KEY')
        // AWS credentials come from the Jenkins credentials store
        AWS_ACCESS_KEY_ID = credentials('ACCESS_KEY_ID')
        AWS_SECRET_ACCESS_KEY = credentials('SECRET_KEY')
        AWS_DEFAULT_REGION = "us-west-1"
    }
    steps {
        // Initialize the S3 backend, then apply without prompting
        sh "cd fitcycle_terraform/ && terraform init --backend-config=\"bucket=BUCKET_NAME\" --backend-config=\"key=terraform.tfstate\" --backend-config=\"region=us-east-1\" -lock=false && terraform apply --input=false --var-file=example_vars_files/us_west_1_mysql.tfvars --auto-approve"
        // Save the outputs so that later pipelines can look up the deployed resources
        sh "cd fitcycle_terraform && terraform output --json > Terraform_Output.json"
    }
}
```

The variables that need to be passed to the Terraform files can be statically defined in a “.tfvars” file, set as environment variables (with the TF_VAR_ prefix), or passed with the -var switch of the terraform apply command.
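As a rough illustration of those three options, here is a minimal Python sketch. The helper names `tf_env` and `apply_command` are hypothetical and not part of the pipeline in this blog; only the `TF_VAR_` prefix, `-var`, and `-var-file` come from Terraform itself. The sketch only builds the environment and the argument list, it does not run Terraform.

```python
import os


def tf_env(variables, base_env=None):
    """Return a copy of the environment with each variable exported as TF_VAR_<name>."""
    env = dict(os.environ if base_env is None else base_env)
    for name, value in variables.items():
        env[f"TF_VAR_{name}"] = str(value)
    return env


def apply_command(var_file=None, cli_vars=None):
    """Build a `terraform apply` argument list using -var-file and/or -var."""
    cmd = ["terraform", "apply", "-input=false", "-auto-approve"]
    if var_file:
        cmd.append(f"-var-file={var_file}")
    for name, value in (cli_vars or {}).items():
        cmd += ["-var", f"{name}={value}"]
    return cmd


# Option 1: environment variables (an empty base_env keeps the example small)
env = tf_env({"option_5_aws_ssh_key_name": "adminKey"}, base_env={})

# Options 2 and 3: a .tfvars file plus a -var switch
cmd = apply_command(
    var_file="example_vars_files/us_west_1_mysql.tfvars",
    cli_vars={"option_6_aws_ssh_key_name": "adminKey"},
)
print(env)
print(" ".join(cmd))
```

In the Jenkinsfile above, the `environment` block plays the role of `tf_env` and the `--var-file` flag covers the “.tfvars” option.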

Use the Jenkins credentials plugin to set the Access and Secret Key.

Notice that in the terraform init command, I am using “backend-config”. The backend configuration instructs Terraform to store the “.tfstate” file at another location, like an S3 bucket, Consul, etc., which acts as the source of truth. It also enables teams to collaborate on the same infrastructure.

This is the RECOMMENDED way to operate with Terraform.

Another advantage of this method is that it allows us to pass/copy “.tfstate” to another pipeline that may use it to either modify existing infra or terminate it entirely.

In this stage, we also store details such as Object ID, Name, etc. about the resources that were deployed by Terraform in a JSON file (ex: Terraform_Output.json). Archive this file as a build artifact so that other pipelines can copy it.
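To give an idea of how a later script might consume that file, here is a small Python sketch. `terraform output -json` wraps each output in an object with `sensitive`, `type`, and `value` fields; the output names and instance IDs in the sample are hypothetical, since the real ones depend on the Terraform templates.

```python
import json


def output_values(raw_json):
    """Flatten `terraform output -json` into {output_name: value}."""
    outputs = json.loads(raw_json)
    return {name: entry["value"] for name, entry in outputs.items()}


# Hypothetical sample; real output names depend on the Terraform templates.
sample = """
{
  "web_instance_ids": {
    "sensitive": false,
    "type": ["list", "string"],
    "value": ["i-0aaa", "i-0bbb"]
  },
  "db_instance_id": {
    "sensitive": false,
    "type": "string",
    "value": "i-0ccc"
  }
}
"""

values = output_values(sample)
print(values["web_instance_ids"])
```

In a pipeline you would read the text from the archived Terraform_Output.json instead of the inline sample.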

4. Finally, in the post actions, we can send the status as a Slack notification.

Final Pipeline script

```groovy
pipeline {
    agent any
    stages {
        stage('Preparation') {
            steps {
                slackSend color: "good", message: "Status: DEPLOYING CLOUD INFRA | Job: ${env.JOB_NAME} | Build number ${env.BUILD_NUMBER} "
                git 'https://github.com/ishrivatsa/demo-secure-state.git'
            }
        }
        stage('Install TF Dependencies') {
            steps {
                sh "sudo apt install wget zip python-pip -y"
                sh "curl -o terraform.zip https://releases.hashicorp.com/terraform/0.12.5/terraform_0.12.5_linux_amd64.zip"
                sh "unzip terraform.zip"
                sh "sudo mv terraform /usr/bin"
                sh "rm -rf terraform.zip"
            }
        }
        stage('Apply') {
            environment {
                TF_VAR_option_5_aws_ssh_key_name = "adminKey"
                TF_VAR_option_6_aws_ssh_key_name = "adminKey"
                AWS_ACCESS_KEY_ID = credentials('ACCESS_KEY_ID')
                AWS_SECRET_ACCESS_KEY = credentials('SECRET_KEY')
            }
            steps {
                sh "cd fitcycle_terraform/ && terraform init --backend-config=\"bucket=BUCKET_NAME\" --backend-config=\"key=terraform.tfstate\" --backend-config=\"region=us-east-1\" -lock=false && terraform apply --input=false --var-file=example_vars_files/us_west_1_mysql.tfvars --auto-approve"
                sh "cd fitcycle_terraform && terraform output --json > Terraform_Output.json"
            }
        }
    }
    post {
        success {
            slackSend color: "good", message: "Status: PIPELINE ${currentBuild.result} | Job: ${env.JOB_NAME} | Build number ${env.BUILD_NUMBER}"
            // Archive the files that the violation-check pipeline copies later
            archiveArtifacts artifacts: 'fitcycle_terraform/Terraform_Output.json', fingerprint: true
            archiveArtifacts artifacts: 'violations_using_api.py', fingerprint: true
        }
        failure {
            slackSend color: "danger", message: "Status: PIPELINE ${currentBuild.result} | Job: ${env.JOB_NAME} | Build number ${env.BUILD_NUMBER}"
        }
        aborted {
            slackSend color: "warning", message: "Status: PIPELINE ${currentBuild.result} | Job: ${env.JOB_NAME} | Build number ${env.BUILD_NUMBER}"
        }
    }
}
```

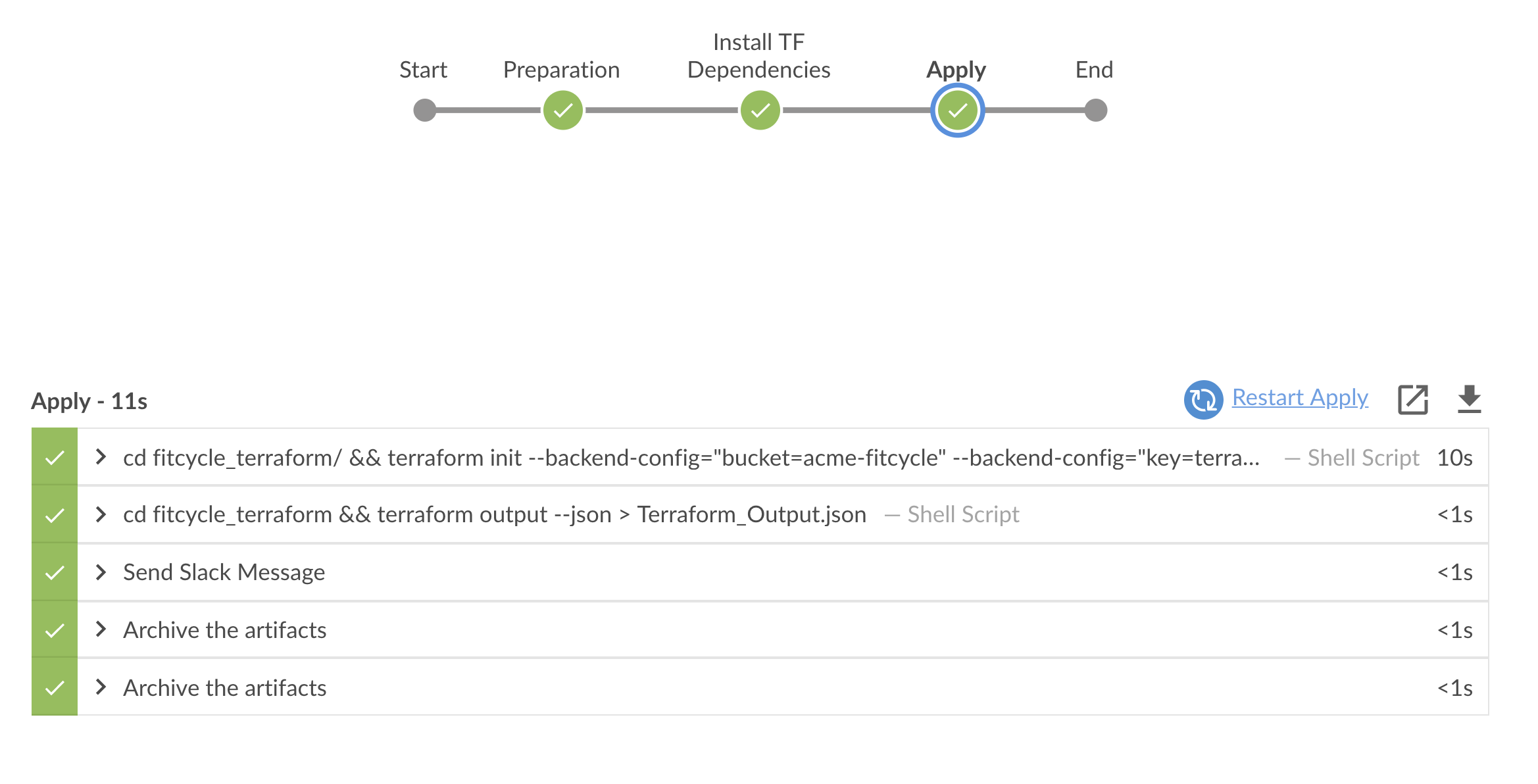

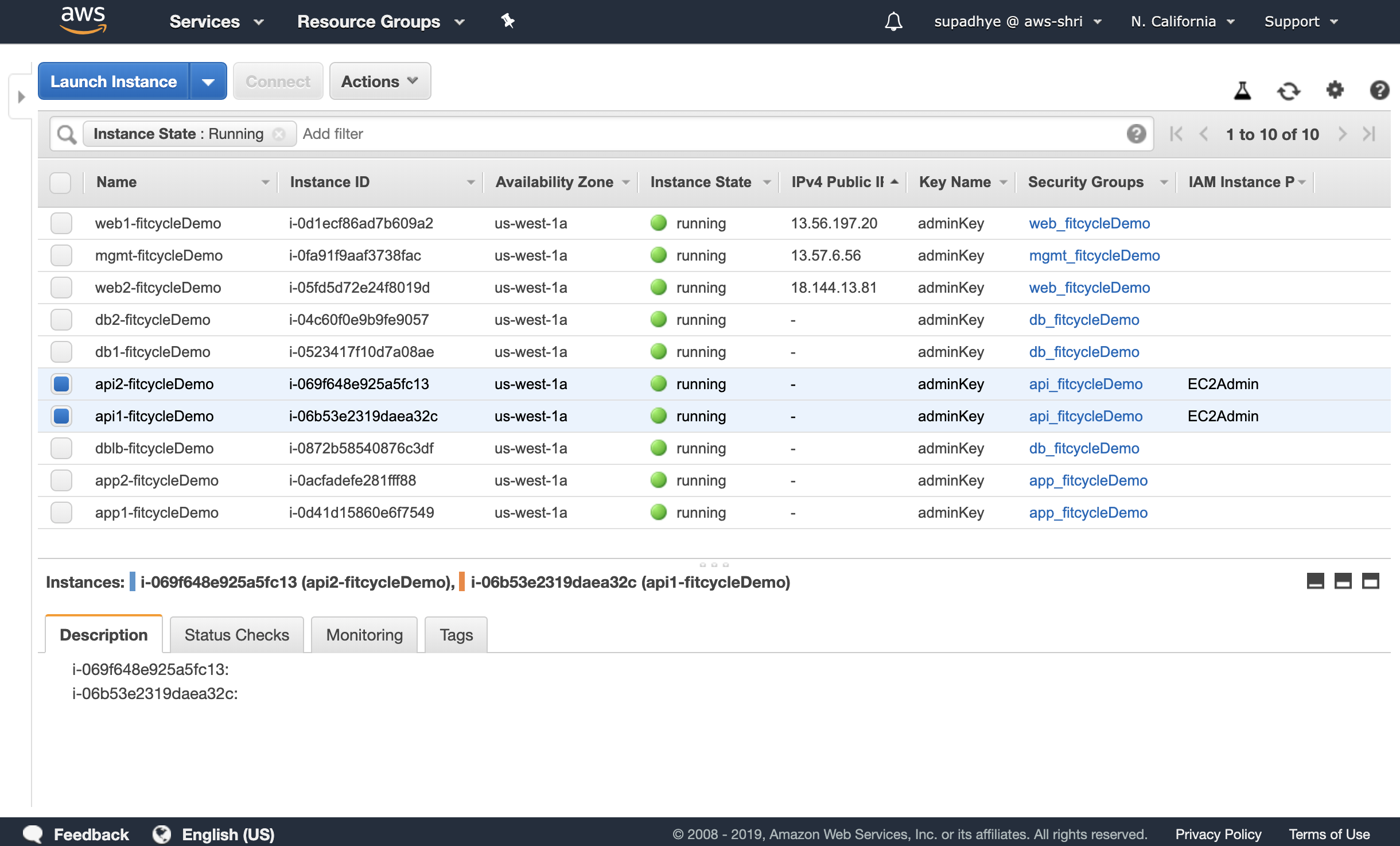

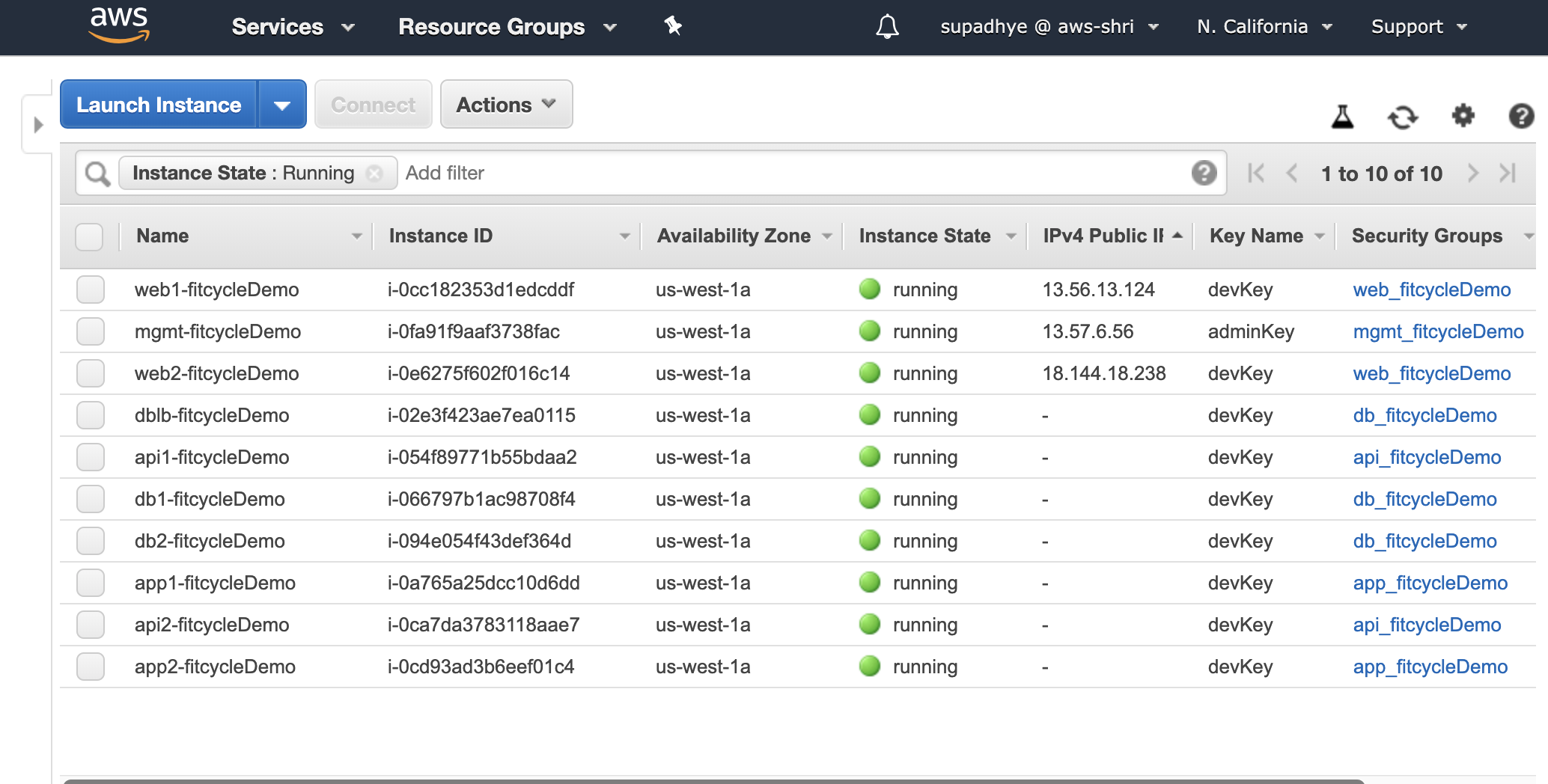

You should expect a state similar to the one shown below, after the execution is completed successfully.

This Terraform template deploys 10 instances. Two of these instances are private and have the “EC2Admin” IAM profile attached to them.

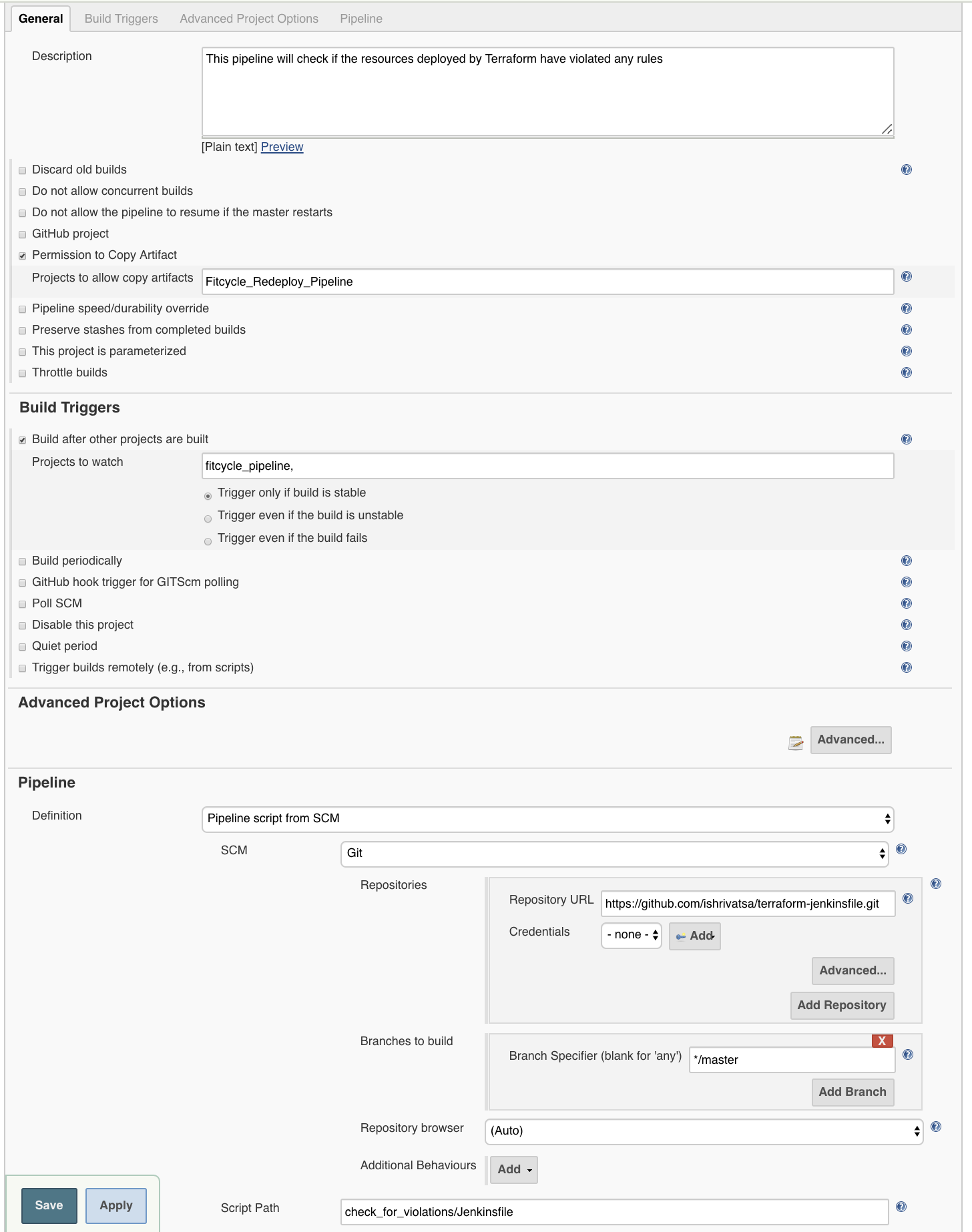

Pipeline 2 - Check for Violations

The next Jenkins pipeline checks for violations, if any, that the security and compliance tool reports against the resources deployed by the previous pipeline (it looks them up in Terraform_Output.json from that pipeline). This is where we leverage the concept of policies. I have described the constraint within the violations.py script. (See 3 below)

The Jenkinsfile for this pipeline can be found here

Stages:

1. Install VMware Secure State Dependencies - Install the CLI/SDK for the security and compliance tool of your choice.

2. Copy Artifacts - Copy artifacts such as the Terraform output file from the deployment pipeline. Also copy any scripts that you may have for checking violations.

3. Check for Violations - I will be using a custom script, “violations.py”. It’s a simple Python script that checks all resources deployed by Terraform for violations against the data from the security tool. You may have to modify this script or write your own for additional policies. In this example, the policy has a constraint which forbids sharing an SSH key with an instance that has an Admin policy attached to it. This is equivalent to the “Investigate” step of the Response Process.

4. Verify - If any violating objects are found, the output is set to True, else False. This flag can be used to send a Slack notification and also to automatically trigger another re-deployment pipeline if a violation was found.
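The actual violations.py is not reproduced here, so the following is only a minimal sketch of the kind of check described in stages 3 and 4. It assumes the instance data has already been merged from the Terraform output and the security tool into a list of dictionaries; the field names (`id`, `key_name`, `has_admin_policy`) and the instance IDs are hypothetical.

```python
from collections import defaultdict


def find_violations(instances):
    """Return sorted IDs of instances whose SSH key is shared with an admin instance."""
    # Group instances by the SSH key pair they were launched with
    by_key = defaultdict(list)
    for inst in instances:
        by_key[inst["key_name"]].append(inst)

    violating = set()
    for group in by_key.values():
        has_admin = any(inst["has_admin_policy"] for inst in group)
        # A key is a problem when an admin instance shares it with any other instance
        if has_admin and len(group) > 1:
            violating.update(inst["id"] for inst in group)
    return sorted(violating)


# Hypothetical data shaped like the example in this blog:
# one public instance sharing a key with two private admin instances.
instances = [
    {"id": "i-public", "key_name": "adminKey", "has_admin_policy": False},
    {"id": "i-private-1", "key_name": "adminKey", "has_admin_policy": True},
    {"id": "i-private-2", "key_name": "adminKey", "has_admin_policy": True},
    {"id": "i-other", "key_name": "otherKey", "has_admin_policy": False},
]

violations = find_violations(instances)
violation_found = bool(violations)  # the True/False flag used by the Verify stage
print(violation_found, violations)
```

Keeping the policy in one small function makes it easier to add further constraints later without touching the pipeline itself.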

Both VMware Secure State and Jenkins will send us Slack notifications with details about the violations and the pipeline status. This maps to the “Communicate” step of the Response Process.

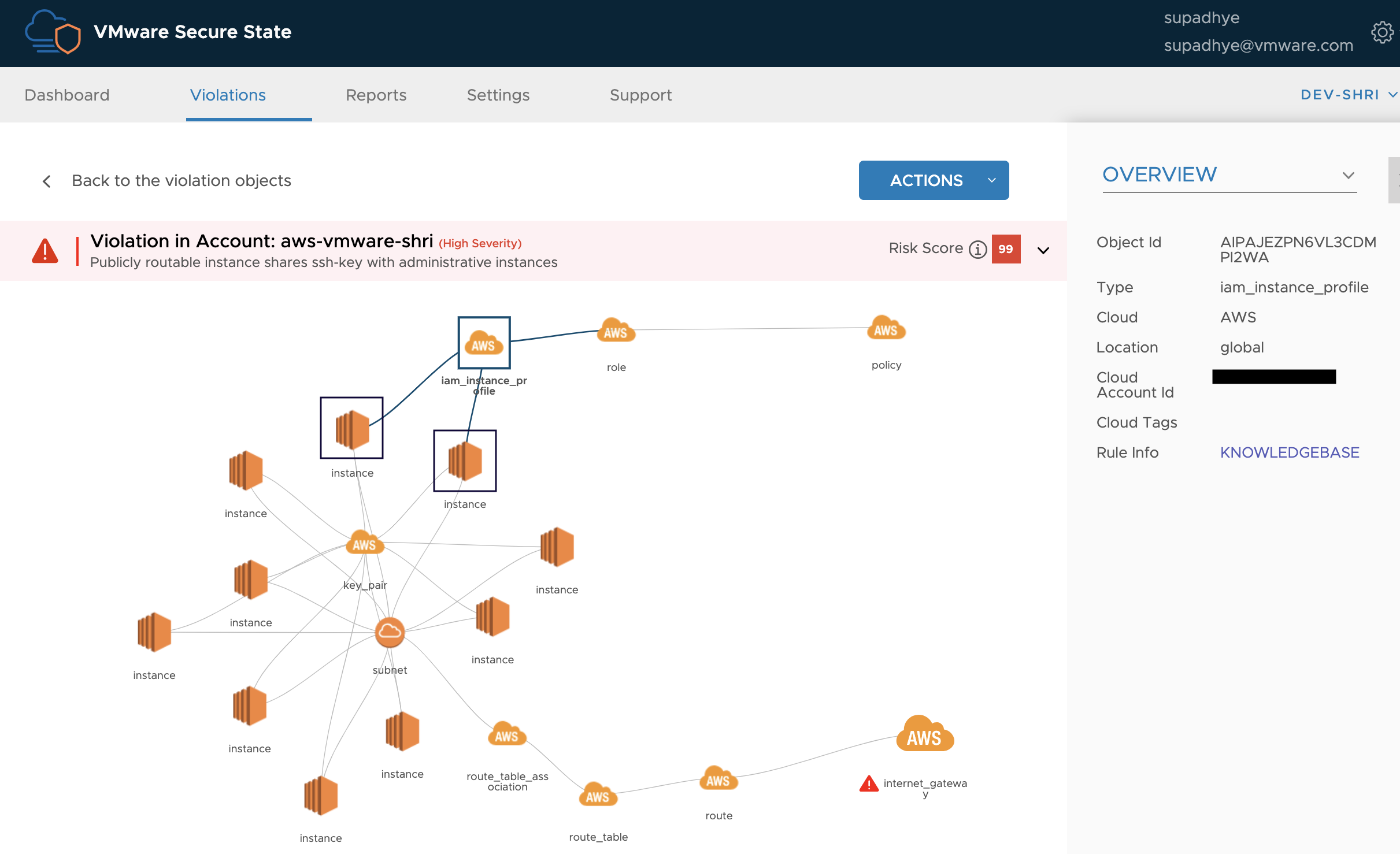

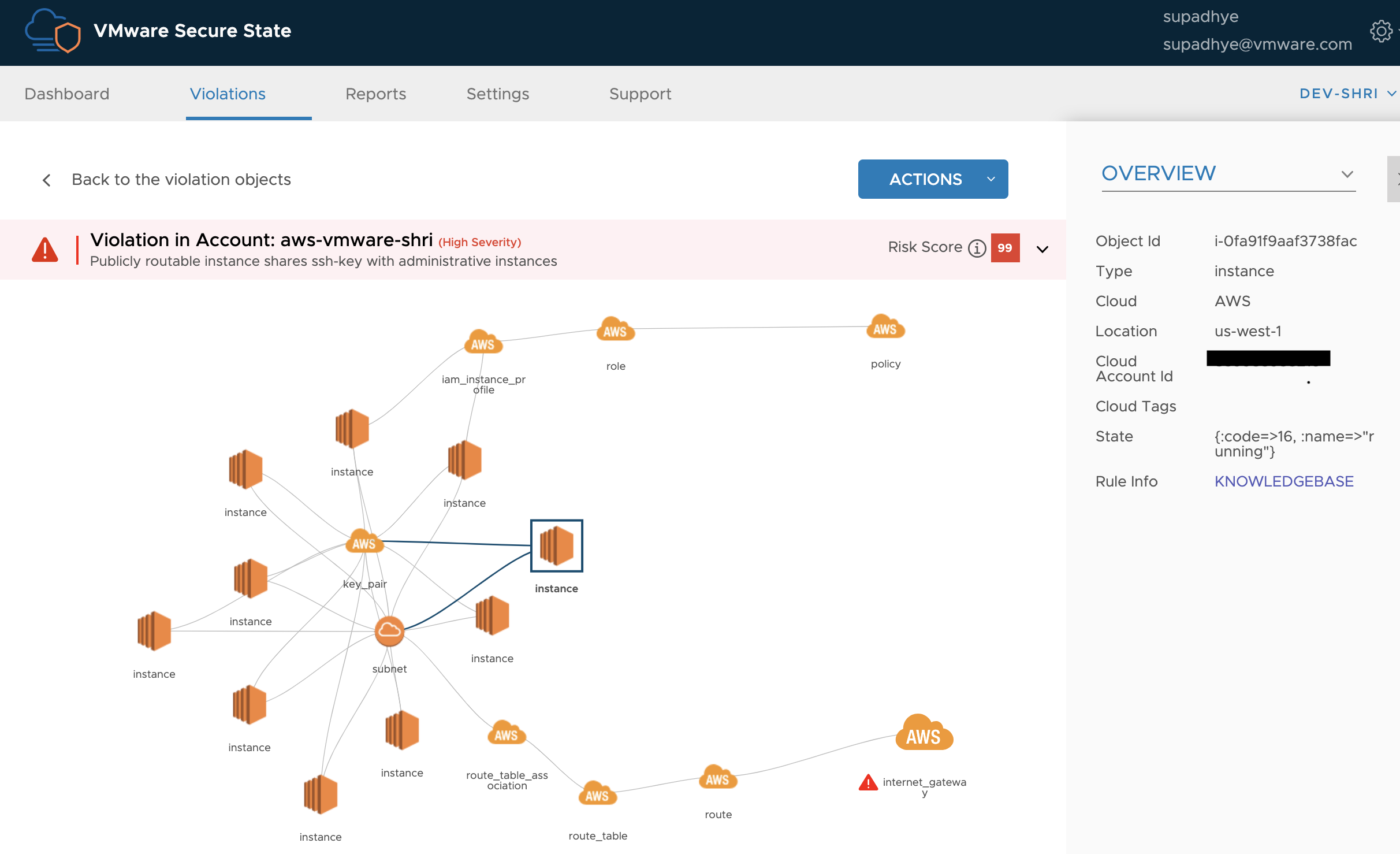

The “connected graph” in the image below shows the resources involved in the violation. The highlighted private instances have IAM Profile attached to them that has an Administrator Access policy (AWS policy) attached to it.

The highlighted instance is publicly accessible. This instance also shares the same SSH Key as the 2 instances with Admin Policy attached to them.

Since these instances share an SSH key, the only corrective action possible is to re-deploy them with new and distinct SSH key pairs (BEST PRACTICE).

Pipeline 3 - Re-deployment using Terraform

Let’s look at the Re-deployment pipeline.

This pipeline is triggered only if the output of previous pipeline is set to “True”. This maps to the “Remediate” step of the Response Process.

The Re-deploy pipeline is almost identical to the deploy pipeline except for one change: the SSH key pair names that are passed as variables to Terraform are distinct, which fixes the violation detected in the previous step.

It’s important to note that this process may need an approval step where someone from the SecOps and/or DevOps team evaluates the change before any modifications are made to the production environment. You may add another step to this stage where the DevOps engineer can either provide you with updated key pair names or push a new “.tfvars” file to the repo, which can trigger the deployment.
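A quick guard like the one below could run before the re-deploy to confirm that the key pair names really are distinct. This is a hypothetical helper, not something taken from the repo, and the replacement key names are made up for illustration.

```python
def keys_are_distinct(key_vars):
    """Return True when every Terraform key-name variable has a different value."""
    names = list(key_vars.values())
    return len(names) == len(set(names))


# Values from the original deploy pipeline: both variables share one key
original = {
    "option_5_aws_ssh_key_name": "adminKey",
    "option_6_aws_ssh_key_name": "adminKey",
}

# Hypothetical replacement values for the re-deploy pipeline
redeploy = {
    "option_5_aws_ssh_key_name": "webKey",
    "option_6_aws_ssh_key_name": "adminKey",
}

print(keys_are_distinct(original), keys_are_distinct(redeploy))
```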

In such scenarios, you may have to trigger this pipeline manually.

You can also write your own script to trigger the re-deployment / modification pipeline based on the “Risk Score”, if supported by the tool of your choice (VMware Secure State provides Risk Scores). If the risk score is high, taking some automated action is usually the better option, for example when your DB instance is publicly accessible.
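A risk-based trigger can be as simple as a threshold check. The sketch below is only an assumption about how such a script might look: the 0 to 10 scale, the threshold of 8, and the action names are all hypothetical and are not taken from VMware Secure State.

```python
def next_action(risk_score, auto_threshold=8):
    """Pick a follow-up action from a numeric risk score (hypothetical 0-10 scale)."""
    if risk_score >= auto_threshold:
        # High risk: trigger the re-deploy pipeline without waiting for approval
        return "auto-remediate"
    # Lower risk: notify the team and wait for a manual trigger
    return "manual-review"


for score in (3, 8, 10):
    print(score, next_action(score))
```

The returned value could then decide whether the pipeline triggers the re-deploy job directly or only sends a notification.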

A successful execution of this pipeline will result in termination of instances with incorrect (shared) ssh-key and re-instantiating them with new keys.

Pipeline 4 - Destroy/Terminate Infrastructure using Terraform

The last pipeline is the Destroy pipeline, which destroys the infra. This pipeline fetches the “.tfstate” file from S3 and then executes the destroy command. You can look at the Jenkinsfile in the repo for all the details on the various stages.
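The Jenkinsfile in the repo is the reference for this pipeline. As a rough sketch of the two commands it boils down to, the Python helper below builds a `terraform init` against the same S3 backend followed by a `terraform destroy`. The function name is hypothetical, and the bucket, key, region, and var-file values are simply reused from the deploy pipeline above as assumptions.

```python
def destroy_commands(bucket, key="terraform.tfstate", region="us-east-1", var_file=None):
    """Build the init and destroy argument lists for an S3-backed Terraform state."""
    # init points Terraform at the remote state written by the deploy pipeline
    init = [
        "terraform", "init", "-input=false",
        f"-backend-config=bucket={bucket}",
        f"-backend-config=key={key}",
        f"-backend-config=region={region}",
    ]
    # destroy needs the same variables as apply, hence the optional var file
    destroy = ["terraform", "destroy", "-auto-approve"]
    if var_file:
        destroy.append(f"-var-file={var_file}")
    return [init, destroy]


commands = destroy_commands(
    "BUCKET_NAME",
    var_file="example_vars_files/us_west_1_mysql.tfvars",
)
for cmd in commands:
    print(" ".join(cmd))
```

To actually run them, each list could be passed to `subprocess.run(cmd, cwd="fitcycle_terraform", check=True)`; in Jenkins the equivalent is an `sh` step like the one in the Apply stage.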

Conclusion

Risks in Public Cloud can be classified into these groups:

a. Network Risks - Firewall, DDoS, Intrusion

b. Compliance and Governance Risks - Related to standards like PCI and HIPAA, and to Identity and Access Management

c. Application Risks - Related to Host Vulnerabilities

Each of these is critical to managing security in a multi-cloud environment. The principles of DevOps can be extended to DevSecOps by leveraging existing tools like Jenkins and Terraform to manage the risks associated with compliance and governance.