VMware Code Stream vs AWS CodePipeline

In my last article, I went through the Good and the Bad of AWS CodePipeline.

There are lots of great things to be said for it. Unfortunately, I found it quite limiting when trying to deploy to non-AWS targets, for example:

- GKE

- On-Prem

- Cloud PKS

- Etc.

You can deploy to other locations, but you need to use Lambda functions to do it. Alternatively, you could use a third-party pipeline tool such as:

- CloudBees / Jenkins

- GitLab

- Armory.io / Spinnaker

- VMware Code Stream

All of these pipeline tools can deploy to any endpoint without being confined to the AWS ecosystem. Today I’ll show how VMware Code Stream provides this flexibility by comparing it with AWS CodePipeline.

Comparison Overview

Let me start with a clarification. CI/CD is a process. A pipeline tool helps manage the CI/CD process, but it doesn’t necessarily have to be a CI/CD tool itself. First, let’s understand the subtle differences between Continuous Integration, Continuous Delivery, and Continuous Deployment. Atlassian has a great blog post explaining Continuous Integration vs. Continuous Delivery vs. Continuous Deployment.

To summarize:

CI vs CD vs CD from Atlassian

Continuous deployment means every change goes through the pipeline and is deployed to production automatically. Continuous delivery lets you hold a deployment if it isn’t viable for whatever reason (business or technical).
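The practical difference between the two CDs comes down to whether a human gate sits in front of production. As a toy model (the enum and function names are hypothetical and come from neither product), the decision at the end of a pipeline might look like this:

```python
from enum import Enum


class ReleaseMode(Enum):
    CONTINUOUS_DELIVERY = "delivery"      # a human can hold the release
    CONTINUOUS_DEPLOYMENT = "deployment"  # every green build ships


def ships_to_production(mode: ReleaseMode, tests_passed: bool, approved: bool) -> bool:
    """Decide whether a change that reached the end of the pipeline goes to production."""
    if not tests_passed:
        # Neither practice ships a failing build.
        return False
    if mode is ReleaseMode.CONTINUOUS_DEPLOYMENT:
        # No manual gate: passing the pipeline is enough.
        return True
    # Continuous delivery: the build is releasable, but waits for a "go" decision.
    return approved


print(ships_to_production(ReleaseMode.CONTINUOUS_DEPLOYMENT, True, False))  # True
print(ships_to_production(ReleaseMode.CONTINUOUS_DELIVERY, True, False))    # False
```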

Let’s get into a brief overview comparison of these two products. They are very similar in a lot of ways. To start with, each is the pipeline piece of a larger solution: for VMware, Code Stream is part of Cloud Automation Services; in AWS, CodePipeline is part of the CodeSuite inside Developer Tools. Both products are designed to work with a multitude of repositories, build providers, and deployment technologies. However, as I covered in my last blog post, CodePipeline can be a bit limiting if your endpoint doesn’t live inside the AWS ecosystem. Code Stream, although it is a VMware product, is very multi-cloud focused, so it works for you no matter where your business chooses to deploy. To compare AWS CodePipeline with Code Stream, I will cover the following functional areas:

- Visual Ease of Use

- Pipeline Creation

- Pipeline Execution

- API Extensibility

- External API Integrations

- JIRA Integration

- CI and CD

Product Comparisons

Visual Ease of Use

Let’s start off with a visual first impression…

Main UI for Code Stream and CodePipeline

One thing you’ll notice immediately is that CodePipeline integrates the entire CodeSuite menu into one UI, which makes it extremely easy to switch back and forth and navigate the full solution. VMware’s Cloud Services Platform (CSP) does make it easy to switch between services, but its layout is not as neatly integrated as CodeSuite’s.

Pipeline Creation

Pipeline creation is pretty straightforward in both products, and both Code Stream and CodePipeline let you import a pipeline. Code Stream lets you do pipeline-as-code using YAML. CodePipeline is a bit more involved, as you have to use a CloudFormation template.
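For comparison, a minimal CodePipeline definition in CloudFormation can be sketched as below. This is an illustrative sketch that emits the template as JSON from Python, assuming a CodeCommit source stage and a CodeBuild build stage; the repository, project, bucket, and role ARN values are hypothetical placeholders, and a real stack would also need that IAM role and S3 artifact bucket to exist.

```python
import json


def codepipeline_template(repo: str, branch: str, build_project: str,
                          bucket: str, role_arn: str) -> dict:
    """Build a minimal CloudFormation template for a two-stage CodePipeline.

    All argument values are placeholders supplied by the caller.
    """
    source_action = {
        "Name": "Source",
        "ActionTypeId": {"Category": "Source", "Owner": "AWS",
                         "Provider": "CodeCommit", "Version": "1"},
        "Configuration": {"RepositoryName": repo, "BranchName": branch},
        "OutputArtifacts": [{"Name": "SourceOutput"}],
    }
    build_action = {
        "Name": "Build",
        "ActionTypeId": {"Category": "Build", "Owner": "AWS",
                         "Provider": "CodeBuild", "Version": "1"},
        "Configuration": {"ProjectName": build_project},
        "InputArtifacts": [{"Name": "SourceOutput"}],
        "OutputArtifacts": [{"Name": "BuildOutput"}],
    }
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            "DemoPipeline": {
                "Type": "AWS::CodePipeline::Pipeline",
                "Properties": {
                    "RoleArn": role_arn,
                    # Artifacts passed between stages are stored in this S3 bucket.
                    "ArtifactStore": {"Type": "S3", "Location": bucket},
                    "Stages": [
                        {"Name": "Source", "Actions": [source_action]},
                        {"Name": "Build", "Actions": [build_action]},
                    ],
                },
            }
        },
    }


if __name__ == "__main__":
    template = codepipeline_template(
        "demo-repo", "main", "demo-build", "demo-artifact-bucket",
        "arn:aws:iam::123456789012:role/demo-pipeline-role",
    )
    print(json.dumps(template, indent=2))
```

The Code Stream equivalent is a single YAML document describing stages and tasks, which is part of why its pipeline-as-code story feels lighter.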

Importing a Pipeline into Code Stream

A big difference between the two products appears when you start to build a pipeline. CodePipeline only offers a wizard for creating a new pipeline, walking you step by step through the setup. Code Stream lets you create a pipeline in two ways: you can use the SmartTemplate wizard to walk you through it, or you can simply open a blank canvas.

Blank Canvas UI for Code Stream

This is where I feel Code Stream really excels in UX, especially for someone who may be new to pipeline tools. It lets you clearly visualize what the pipeline does: you create stages, drag tasks in from the left side, and then configure each task in its properties. It is very simple to pick up and learn.

To be fair, CodePipeline has a similar feature, but only when you are editing a completed pipeline. It lets you visualize the pipeline and manipulate stages and actions; it just doesn’t help much with creation.

Edit Pipeline UI in CodePipeline

Pipeline Execution

Pipeline execution is also very straightforward in both products. You can manually execute any pipeline at any time, but that defeats the purpose of automating releases. The best way to execute a pipeline is from an automated event. This generally takes the form of a trigger that executes the pipeline whenever there is a commit or a push to the repository the pipeline uses. AWS makes this painless by creating the trigger automatically when you set up the repository in the source stage. If you are using GitHub, GitHub webhooks is the default (and recommended) option when you create the source action. You are asked to approve an app authorization between GitHub and AWS, and CodePipeline does the rest of the work for you.
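You never write the receiving side of such a webhook yourself in either product, but a generic sketch of what a receiver checks can make the mechanism concrete. This assumes GitHub’s documented `X-Hub-Signature-256` header (an HMAC-SHA256 of the raw request body keyed with the webhook secret) and a plain dict of headers; it is not how AWS or VMware implement their endpoints.

```python
import hashlib
import hmac


def is_valid_push(secret: bytes, body: bytes, headers: dict) -> bool:
    """Return True if a webhook request is an authentic GitHub push event.

    `headers` is a plain dict here; real HTTP header lookups are case-insensitive.
    """
    if headers.get("X-GitHub-Event") != "push":
        return False  # ignore pings, pull request events, etc.
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    received = headers.get("X-Hub-Signature-256", "")
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, received)
```

Only when this check passes would the receiver go on to start the pipeline for the pushed branch.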

GitHub Webhook Creation in CodePipeline

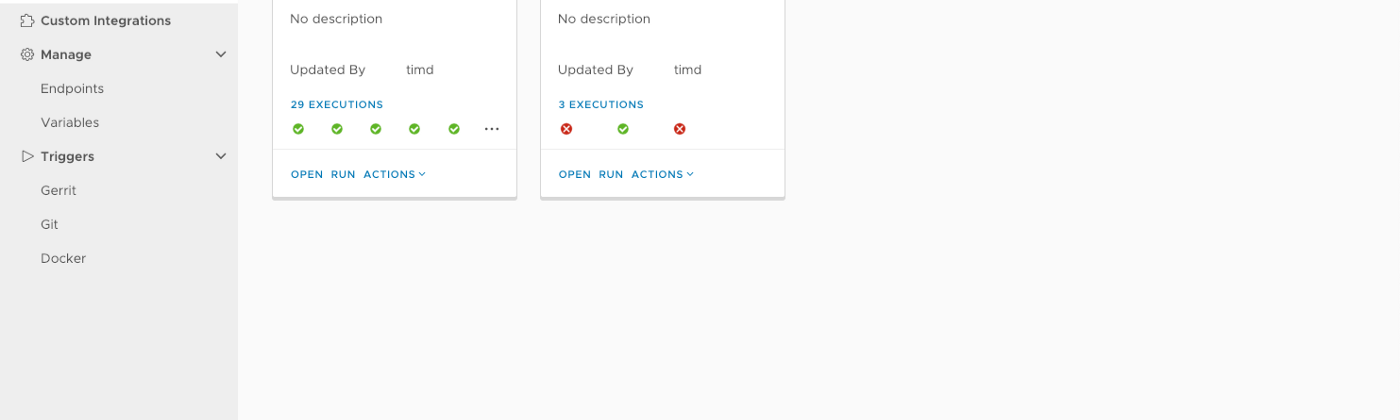

In Code Stream, you have to set up triggers manually from the main menu. You can trigger off Gerrit, Git, and Docker, so any push you do will automatically trigger your pipeline. When you configure a trigger, you have to assign it to a pipeline, so you’ll have a separate trigger for each pipeline. The webhook configuration itself is roughly the same: when you create the webhook, you give it your credentials, and Code Stream does the work for you. It just doesn’t happen automatically during pipeline creation.
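Conceptually, one trigger per pipeline behaves like a routing table from repository and branch to pipeline. The sketch below is a hypothetical model of that idea, not Code Stream’s implementation or API; the `GitTrigger` class and the repository and pipeline names are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GitTrigger:
    """Hypothetical model of a Git trigger: one repo/branch bound to one pipeline."""
    repo: str
    branch: str
    pipeline: str


def pipelines_for_push(triggers: list[GitTrigger], repo: str, ref: str) -> list[str]:
    """Return the pipelines whose trigger matches a push to `ref` (e.g. 'refs/heads/main')."""
    branch = ref.removeprefix("refs/heads/")
    return [t.pipeline for t in triggers if t.repo == repo and t.branch == branch]


triggers = [
    GitTrigger("acme/web", "main", "web-prod"),
    GitTrigger("acme/web", "develop", "web-dev"),
    GitTrigger("acme/api", "main", "api-prod"),
]
print(pipelines_for_push(triggers, "acme/web", "refs/heads/main"))  # ['web-prod']
```

Keeping the binding explicit means a push only starts the pipelines you deliberately wired to that branch.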

GitHub Webhook Creation in Code Stream

API Extensibility

Both solutions come in strong with an API for configuration and management. Working toward true DevOps in your organization means automation, even down to automating the automation. Here CodePipeline takes the lead, not for extensibility per se, but for its API documentation alone. For reference, Code Stream publishes its full API as Swagger, and CodePipeline has its own API documentation.
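As a small example of automating the automation, the sketch below starts a CodePipeline execution and reads back the latest status using the boto3 calls `start_pipeline_execution` and `list_pipeline_executions`. The client is passed in (normally `boto3.client("codepipeline")`) so the functions can be exercised with a stub; the pipeline name is a placeholder.

```python
def start_and_report(client, pipeline_name: str) -> str:
    """Start a CodePipeline execution and return its execution ID.

    `client` is expected to be a boto3 CodePipeline client, e.g.
    boto3.client("codepipeline"); any object with the same methods works.
    """
    response = client.start_pipeline_execution(name=pipeline_name)
    return response["pipelineExecutionId"]


def latest_status(client, pipeline_name: str) -> str:
    """Return the status of the most recent execution (e.g. 'InProgress', 'Succeeded')."""
    summaries = client.list_pipeline_executions(
        pipelineName=pipeline_name, maxResults=1
    )["pipelineExecutionSummaries"]
    return summaries[0]["status"] if summaries else "NoExecutions"
```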

External API Integrations

One area where I feel Code Stream comes out on top is its ability to integrate a plethora of other solutions into the pipeline using REST. Code Stream can create a stage task for any REST call: you fill out the call parameters, and it fires the call right from the pipeline. I was unable to find a way to do this in CodePipeline. I’d imagine you could use a Lambda function inside the pipeline to do it, but that isn’t as clean a solution.
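A REST task essentially boils down to a method, a URL, headers, and a body. The following is a generic standard-library sketch of that shape, not Code Stream’s task engine; the endpoint URL, token, and payload fields are hypothetical, and it builds the request without sending it.

```python
import json
import urllib.request
from typing import Optional


def build_rest_task(method: str, url: str, headers: Optional[dict] = None,
                    payload: Optional[dict] = None) -> urllib.request.Request:
    """Turn REST-task style parameters (method, URL, headers, body) into a request."""
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    req = urllib.request.Request(url, data=data, method=method.upper())
    for name, value in (headers or {}).items():
        req.add_header(name, value)
    if data is not None:
        req.add_header("Content-Type", "application/json")
    return req


req = build_rest_task("post", "https://example.com/api/notify",
                      {"Authorization": "Bearer <token>"},
                      {"pipeline": "web-prod", "status": "COMPLETED"})
print(req.get_method(), req.full_url)  # POST https://example.com/api/notify
# urllib.request.urlopen(req) would actually send it.
```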

JIRA Integration

JIRA has become extremely important for many organizations these days. For some people, a lack of JIRA integration is almost a deal-breaker when choosing new solutions. For this one, Code Stream clearly comes out on top. Unfortunately, I can’t find any reference to integrating JIRA with CodePipeline. That doesn’t mean it’s impossible in some form or fashion; I just can’t see anywhere to do it in the tool, nor can I find any docs or write-ups on it. I’d imagine you’d do it in CodeBuild (upon failure), but again, I can’t find it anywhere in the CodeSuite. With Code Stream, it is as easy as adding a JIRA endpoint to the configuration, then calling it to open a ticket when the pipeline fails.
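To give a sense of what such a failure ticket involves, here is a sketch that builds a Jira REST API v2 "create issue" request (`POST /rest/api/2/issue`) using basic authentication with a user and API token. The base URL, project key, and the `Bug` issue type are assumptions about your Jira setup, and this is not how Code Stream’s JIRA endpoint works internally.

```python
import base64
import json
import urllib.request


def jira_failure_ticket(base_url: str, user: str, api_token: str, project_key: str,
                        pipeline: str, stage: str, error: str) -> urllib.request.Request:
    """Build (but do not send) a Jira REST v2 'create issue' request for a failed pipeline."""
    fields = {
        "project": {"key": project_key},
        "issuetype": {"name": "Bug"},  # assumes the project has a "Bug" issue type
        "summary": f"Pipeline {pipeline} failed in stage {stage}",
        "description": error,
    }
    creds = base64.b64encode(f"{user}:{api_token}".encode()).decode()
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/rest/api/2/issue",
        data=json.dumps({"fields": fields}).encode("utf-8"),
        headers={"Authorization": f"Basic {creds}", "Content-Type": "application/json"},
        method="POST",
    )
```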

CI

While both products let you do CI and CD (and the other CD) in the pipeline, Code Stream is the only one of the two that does CI directly in the pipeline tool. You configure a CI workspace with your build machine or container image, then set up a CI task in a pipeline stage and write out the commands you want to run on the build machine. This also lets you run the tests you need to approve the build for delivery or deployment.
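The behavior of a CI task (run a list of commands on the build machine and fail the stage at the first error) can be sketched as follows. This is a local Python approximation, not Code Stream’s executor; the commands are stand-ins that simulate a build step and a failing test.

```python
import subprocess
import sys


def run_ci_steps(commands: list[list[str]]) -> bool:
    """Run build/test commands in order; stop at the first non-zero exit code."""
    for cmd in commands:
        result = subprocess.run(cmd, capture_output=True, text=True)
        print(f"$ {' '.join(cmd)} -> exit {result.returncode}")
        if result.returncode != 0:
            print(result.stderr, end="")
            return False  # later steps are skipped, like a failed CI task
    return True


ok = run_ci_steps([
    [sys.executable, "-c", "print('build')"],
    [sys.executable, "-c", "import sys; sys.exit(1)"],  # simulated failing test
    [sys.executable, "-c", "print('never runs')"],
])
print("CI passed" if ok else "CI failed")
```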

CI Tasks in Code Stream

To complete CI tasks in CodePipeline, you’d call CodeBuild or Jenkins from the build stage of the pipeline. Using CodeBuild from CodePipeline is very easy because the CodeSuite services are so tightly integrated: you can run the CodeBuild wizard directly from within the CodePipeline wizard, or do it through the API.

CD

The story with CD is basically the same as with CI. Code Stream can perform many deploy functions directly, against many different types of endpoints, whereas CodePipeline simply calls CodeDeploy or one of the other AWS services. One of the most notable differences is where you can deploy natively, particularly when it comes to Kubernetes.

The Great Kubernetes Deployment Problem

This brings us to the problem I had with CodePipeline, which Code Stream solved easily: deploying to Kubernetes is pretty difficult with CodePipeline. The deployment choices are great if you are 100% in the AWS ecosystem.

Deploy Providers

As you can see, Kubernetes isn’t really an option. You can absolutely deploy to ECS, and that is great if you use ECS. But take a look at the process for deploying to Kubernetes.

It essentially involves piping your output into Lambda to do the deployment. That works, but it is a pretty convoluted solution. Kubernetes has become the industry standard for container management, even in AWS, which has its own managed Kubernetes service (Amazon EKS). So why is it still so hard to deploy to it?
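For reference, the Lambda side of this pattern can be sketched as below. CodePipeline invokes the function with a `CodePipeline.job` event, and the function has to report back with `put_job_success_result` or `put_job_failure_result`, otherwise the action sits waiting until it times out. The `deploy` callable is hypothetical and stands in for whatever actually talks to the cluster; the client is injected (normally `boto3.client("codepipeline")`) so the handler can be exercised with a stub.

```python
def make_handler(codepipeline, deploy):
    """Build a Lambda handler for a CodePipeline 'Invoke' action.

    `codepipeline` is a boto3 CodePipeline client; `deploy` is a hypothetical
    callable that does the real work (e.g. applying manifests to a cluster).
    """
    def handler(event, context):
        job = event["CodePipeline.job"]
        job_id = job["id"]
        # UserParameters is the free-form string configured on the pipeline action.
        config = job["data"]["actionConfiguration"]["configuration"]
        params = config.get("UserParameters", "")
        try:
            deploy(params)
        except Exception as exc:
            # Report the failure so the pipeline stops instead of timing out.
            codepipeline.put_job_failure_result(
                jobId=job_id,
                failureDetails={"type": "JobFailed", "message": str(exc)[:5000]},
            )
            return
        codepipeline.put_job_success_result(jobId=job_id)

    return handler
```

Even in this trimmed-down form, the extra plumbing (job reporting, failure handling, packaging a Lambda) is the convolution described above.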

Code Stream approaches this problem with a multi-cloud mindset. It doesn’t matter where your Kubernetes cluster is deployed or how it’s managed. All you need is the cluster’s API URL and an authentication token.
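To illustrate why an API URL and a token are enough, the sketch below builds a request that updates a Deployment’s container image through the Kubernetes API (`PATCH /apis/apps/v1/namespaces/{namespace}/deployments/{name}` with a strategic merge patch). This is a generic illustration, not Code Stream’s internals; the cluster URL, token, namespace, and names are placeholders, and the request is only built, not sent.

```python
import json
import urllib.request


def set_image_request(api_url: str, token: str, namespace: str, deployment: str,
                      container: str, image: str) -> urllib.request.Request:
    """Build a Kubernetes API request that changes one container's image."""
    patch = {"spec": {"template": {"spec": {"containers": [
        {"name": container, "image": image}
    ]}}}}
    return urllib.request.Request(
        f"{api_url.rstrip('/')}/apis/apps/v1/namespaces/{namespace}/deployments/{deployment}",
        data=json.dumps(patch).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            # Strategic merge patch merges the containers list by container name.
            "Content-Type": "application/strategic-merge-patch+json",
        },
        method="PATCH",
    )
```

Actually sending it with `urllib.request.urlopen(req)` would typically also require an `ssl.SSLContext` that trusts the cluster’s CA certificate.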

You can see the full Kubernetes setup in my Code Stream / Cloud PKS blog post.

Conclusion

As you can see, CodePipeline is clearly superior in some aspects, such as how its UI integrates with the greater solution. However, in the one aspect that really mattered to me at the time, Code Stream delivered the results in a much cleaner and easier fashion.