AWS CodePipeline: The Good and the Bad

I have been doing a lot of work in the realm of CI/CD lately, and one of the tools I have been using is CodePipeline, a very popular CI/CD service from AWS. In AWS’s own words, “AWS CodePipeline is a continuous integration and continuous delivery service for fast and reliable application and infrastructure updates. CodePipeline builds, tests, and deploys your code every time there is a code change, based on the release process models you define.”

Demo Pipeline

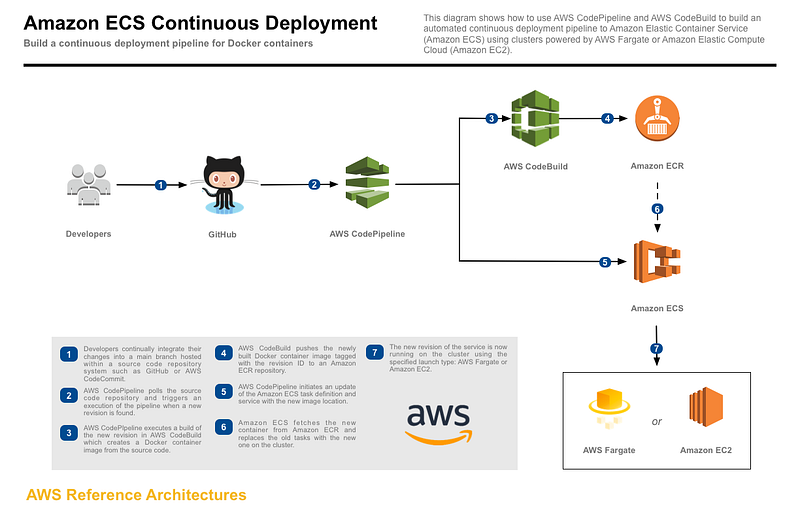

To make sure I tested everything out, I deployed a demo pipeline. This pipeline is from an AWS Labs demo that can be found here:

This demo uses CloudFormation templates to do most of the work for you. All you really have to do is fork the GitHub repo, and set up a personal access token.

The pipeline starts with a GitHub repo. It automatically creates a trigger for any commits to the GitHub repository, so that you don’t have to manually trigger the pipeline release. It then uses CodeBuild to build the Docker images and push them to ECR. Once the images are there, it uses Amazon ECS for the actual container deployments onto Fargate or EC2. For this demo, I’m using Fargate.
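To make the three stages concrete, here is a minimal sketch of how that flow could be written down in the stage/action shape that CodePipeline's pipeline structure uses. It is not the demo's actual CloudFormation template. The pipeline name, artifact names, and every argument value are hypothetical, and a real definition would also need a role ARN and an artifact store, which are left out here.

```python
import json


def action(name, category, owner, provider, configuration, inputs=(), outputs=()):
    """Build one action entry in the shape CodePipeline's pipeline structure uses."""
    return {
        "name": name,
        "actionTypeId": {
            "category": category,
            "owner": owner,
            "provider": provider,
            "version": "1",
        },
        "configuration": configuration,
        "inputArtifacts": [{"name": n} for n in inputs],
        "outputArtifacts": [{"name": n} for n in outputs],
    }


def demo_pipeline(repo_owner, repo, branch, build_project, cluster, service):
    """Sketch of a GitHub -> CodeBuild -> ECS pipeline (names are hypothetical)."""
    source = action(
        "GitHubSource", "Source", "ThirdParty", "GitHub",
        {
            "Owner": repo_owner,
            "Repo": repo,
            "Branch": branch,
            # Placeholder only: never hard-code a real personal access token.
            "OAuthToken": "<personal access token>",
        },
        outputs=["App"],
    )
    build = action(
        "DockerBuild", "Build", "AWS", "CodeBuild",
        {"ProjectName": build_project},
        inputs=["App"], outputs=["BuildOutput"],
    )
    deploy = action(
        "DeployToEcs", "Deploy", "AWS", "ECS",
        {"ClusterName": cluster, "ServiceName": service,
         "FileName": "imagedefinitions.json"},
        inputs=["BuildOutput"],
    )
    return {
        "name": "demo-pipeline",
        "stages": [
            {"name": "Source", "actions": [source]},
            {"name": "Build", "actions": [build]},
            {"name": "Deploy", "actions": [deploy]},
        ],
    }


if __name__ == "__main__":
    pipeline = demo_pipeline("my-user", "my-fork", "master",
                             "demo-build", "demo-cluster", "demo-service")
    print(json.dumps(pipeline, indent=2))
```

Each stage hands its output artifact to the next one by name, which is how the source checkout reaches CodeBuild and the build output reaches the ECS deploy action.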

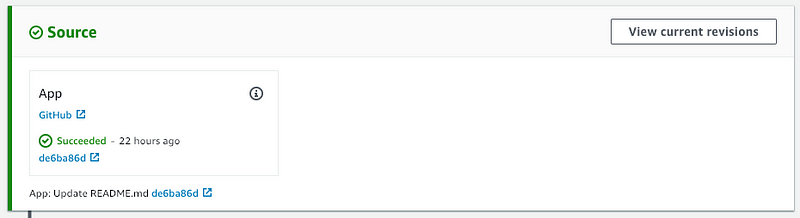

Source Stage

Source Stage Screenshot

The Good

CodePipeline is a very easy service to get started with. It is part of the overall AWS CodeSuite solution. This suite includes CodeCommit, CodeBuild, CodeDeploy, and CodePipeline. The source stage of the pipeline has several great repository options.

Source Stage Options

As you can see, there are several great options for 100% AWS shops. CodeCommit, ECR, and S3 can all be used. But what about shops that use 3rd-party repositories? Well, there is good news here. GitHub, which is one of the most prominent source repository services, is fully supported. This makes it easy for shops with existing projects to get started with CodePipeline quickly.

The Bad

There is a flip side to everything I just said. While CodePipeline does have great options for source code, it is also limited. You’ll find that to be the common theme here.

Some popular source control tools not supported by CodePipeline:

- Bitbucket

- Team Foundation Server

- GitLab

- Gerrit

I’m sure you can find a way to script a replication from another 3rd-party repository into one of the supported options, or you could just switch. But that can be a huge hurdle when evaluating and selecting a CI/CD solution.
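As a rough idea of what such a replication script might look like, the sketch below mirrors one Git repository into another using `git clone --mirror` and `git push --mirror`. The function names, URLs, and working directory are all hypothetical, and it assumes `git` is installed and that credentials for both remotes are already configured.

```python
import subprocess


def mirror_commands(source_url, target_url, workdir="mirror.git"):
    """Return the git commands that copy every ref from source to target."""
    return [
        # A mirror clone is bare and carries all branches and tags.
        ["git", "clone", "--mirror", source_url, workdir],
        # Push every ref to the supported repository (for example CodeCommit).
        ["git", "-C", workdir, "push", "--mirror", target_url],
    ]


def replicate(source_url, target_url, run=subprocess.run):
    """Run the mirror commands in order, stopping on the first failure."""
    for command in mirror_commands(source_url, target_url):
        run(command, check=True)


if __name__ == "__main__":
    # Hypothetical URLs; printing instead of running keeps this side-effect free.
    for command in mirror_commands("https://example.com/team/app.git",
                                   "https://example.com/mirror/app.git"):
        print(" ".join(command))
```

You would still need something to run this on a schedule or on every push, which is part of why it is a hurdle and not a free lunch.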

Build Stage

Build Stage Screenshot

The Good AND The Bad

There is a lot of good here in the build stage. So much that I am going to combine the good and the bad so that it doesn’t look like my sections have no substance. The bad part here is that the only two build providers available are CodeBuild and Jenkins.

Build Stage Options

The good part is that CodeBuild and Jenkins give you a huge number of options. Jenkins is very well known and widely used in the industry, so if you already have a Jenkins build server and are actively using it, it is plug-and-play. If you go with CodeBuild, you’ll be offered a rich build environment. Build server choices are Windows and Ubuntu, and there is a plethora of runtimes available.

CodeBuild Options

As you can see, you can pretty much do what you wish in the build stage. They cater to a large crowd, so feel free to run wild.

Deploy Stage

Deploy Stage Screenshot

The Good

Continuing the trend in this article, if you are a 100% AWS shop, your options are vast. There are several very robust deployment providers for your pipeline.

Deploy Stage Options

CloudFormation, CodeDeploy, Elastic Beanstalk, ECS, and more: all of these are great services provided by AWS. With CodeDeploy, you could drop your build into Lambda for immediate execution. If it’s a web app you’re deploying, then Elastic Beanstalk may be right up your alley. It makes it extremely easy to deploy, monitor, and scale your application. Our demo pipeline uses Amazon ECS as the deployment endpoint.

The Bad

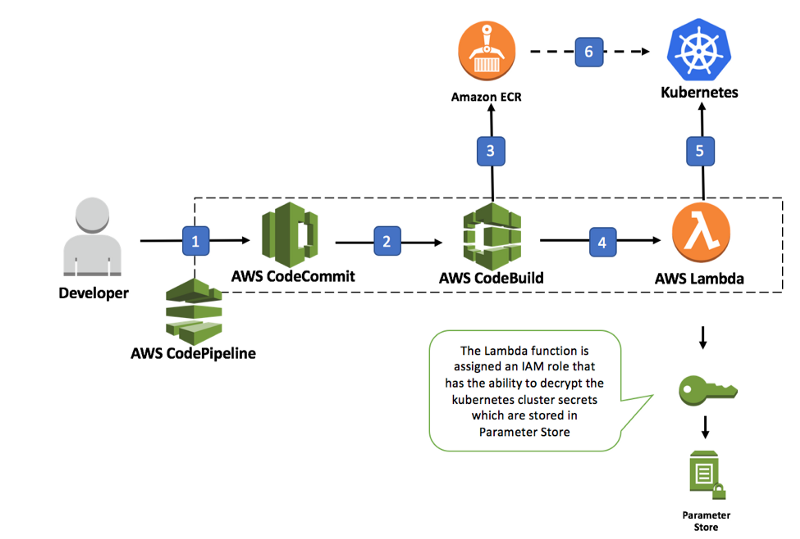

Did you notice that every single one of the deployment providers started with Amazon or AWS of some kind? Except for Alexa, that is, but it might as well. That is the really restricting part here. If your service doesn’t reside in AWS, or even if it is in AWS but is a managed service from a 3rd-party provider, you’re going to run into issues. Let’s say you want to use your own Kubernetes cluster that you built on EC2. You cannot simply give CodePipeline your cluster’s API address and the security token for deployment. The accepted workaround would be to use CodeBuild to push the image into ECR, then invoke a Lambda function that goes and deploys it into your cluster.

3rd Party Kubernetes Workflow
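To give a feel for the Lambda half of that workaround, here is a minimal sketch of a handler invoked by a CodePipeline Lambda action. It reads the job from the event, sends a strategic merge patch to the Kubernetes API to change a Deployment's container image, and reports the result back to CodePipeline. The environment variable names (`K8S_API_SERVER`, `K8S_TOKEN`), the keys expected in `UserParameters`, and the injectable `codepipeline` and `send` arguments are all assumptions made for illustration. A real function would also need to trust the cluster's CA certificate and should pull the token from a secrets store.

```python
import json
import os
import urllib.request


def build_image_patch(api_server, token, namespace, deployment, container, image):
    """Build (but do not send) a PATCH request that updates one container image."""
    body = {
        "spec": {"template": {"spec": {
            "containers": [{"name": container, "image": image}]
        }}}
    }
    url = f"{api_server}/apis/apps/v1/namespaces/{namespace}/deployments/{deployment}"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/strategic-merge-patch+json",
        },
    )


def handler(event, context, codepipeline=None, send=urllib.request.urlopen):
    """Entry point for a CodePipeline 'Invoke Lambda' action."""
    job = event["CodePipeline.job"]
    job_id = job["id"]
    if codepipeline is None:
        import boto3  # Available in the Lambda Python runtime.
        codepipeline = boto3.client("codepipeline")
    try:
        # UserParameters is a free-form string; this sketch assumes it holds
        # JSON with namespace, deployment, container, and image keys.
        params = json.loads(
            job["data"]["actionConfiguration"]["configuration"]["UserParameters"]
        )
        request = build_image_patch(
            os.environ["K8S_API_SERVER"], os.environ["K8S_TOKEN"], **params
        )
        send(request)
        codepipeline.put_job_success_result(jobId=job_id)
    except Exception as exc:
        # Without an explicit result, the pipeline action waits until it times out.
        codepipeline.put_job_failure_result(
            jobId=job_id,
            failureDetails={"type": "JobFailed", "message": str(exc)},
        )
```

Note that the function needs a network path to the cluster's API server, which is why VPC access matters for this approach.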

This is a very convoluted solution to a problem that I personally think shouldn’t exist. And honestly, this was the issue that made me start writing this article.

Let’s say you use a 3rd-party managed Kubernetes solution, such as VMware Cloud PKS. This solution lives in AWS. The problem is that you don’t have access to the AWS IAM for the account, or to the account itself, for that matter. The deployment solution I talked about above requires you to have IAM access, as well as access to the VPC that your Kubernetes cluster is deployed in. So, essentially, you cannot use 3rd-party managed Kubernetes solutions with CodePipeline unless you want to write some very convoluted Lambda function to do it, and it honestly wouldn’t be worth the effort. Your best bet would be to decide whether you want to change your CI/CD solution or your Kubernetes provider.

Conclusion

I just went on a small rant there about a very big issue I found with AWS CodePipeline, but that doesn’t change the fact that I think it is a fantastic service. If you are a 100% AWS shop, or you’re not using a 3rd-party managed Kubernetes service, the entire CodeSuite from AWS is something you need to take a look at. It is just one of the many reasons that AWS has positioned itself so strongly in the public cloud space. Are there other solutions out there that can provide you with much more vendor-neutral choices of endpoints? Absolutely! You can check out Spinnaker provided by Armory, Jenkins provided by CloudBees, or even CodeStream by VMware, which we will be talking about in the next blog.